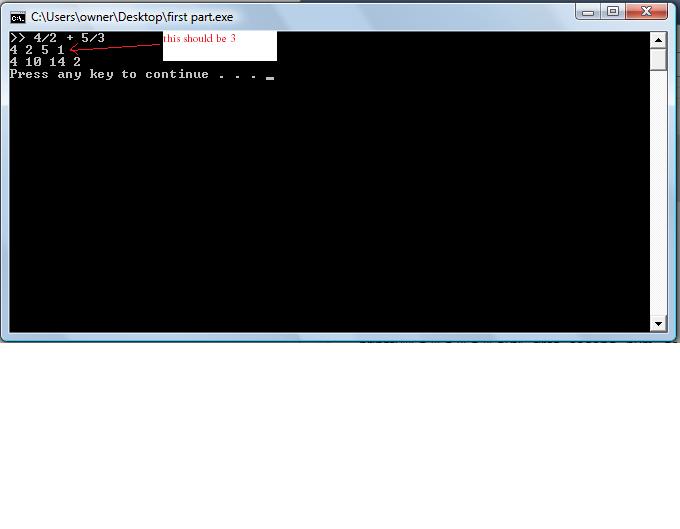

So I wrote a snippet of my code for a program that is supposed to add two fractions and later simplify the result. To check whether the first part of my program was working, I ran a separate program with this code:

I printed out the values of `a`, `b`, `c`, and `d` to make sure the program was getting the correct values from the user. The user's input is supposed to be in the form `a/b + c/d`, where `a`, `b`, `c`, and `d` are provided by the user.

For some reason, whatever is entered for `d`, the program still prints 1 for `d`. The other variables are fine. Because `d` always comes out as 1, the values that depend on `d` (`first`, `num`, and `den`) come out wrong as well.

Code:

```c
#include <stdio.h>
#include <stdlib.h>

int main()
{
    int a, b, c, d, e, num, first, second, den, whole, newnum, t;

    do
    {
        printf(">> ");
        scanf("%d/%d + %d/%d", &a, &b, &c, &d);
        if((b==0)||(d=0))
        {
            printf("Invalid input: Denominator cannot be zero!");
        }
        if ((b!=0)&&(d=!0))
        {
            break;
        }
    } while((b==0)||(d==0));

    if(b<0)
    {
        a=-a;
        b=abs(b);
    }
    if(d<0)
    {
        c=-c;
        d=abs(d);
    }

    printf("%d %d %d %d\n", a, b, c, d);

    first=a*d;
    second=b*c;
    num=first+second;
    den=b*d;

    printf("%d %d %d %d\n", first, second, num, den);

    return 0;
}
```

Please help! I can't seem to locate the problem. Here is a snippet of what happens when I run this program: